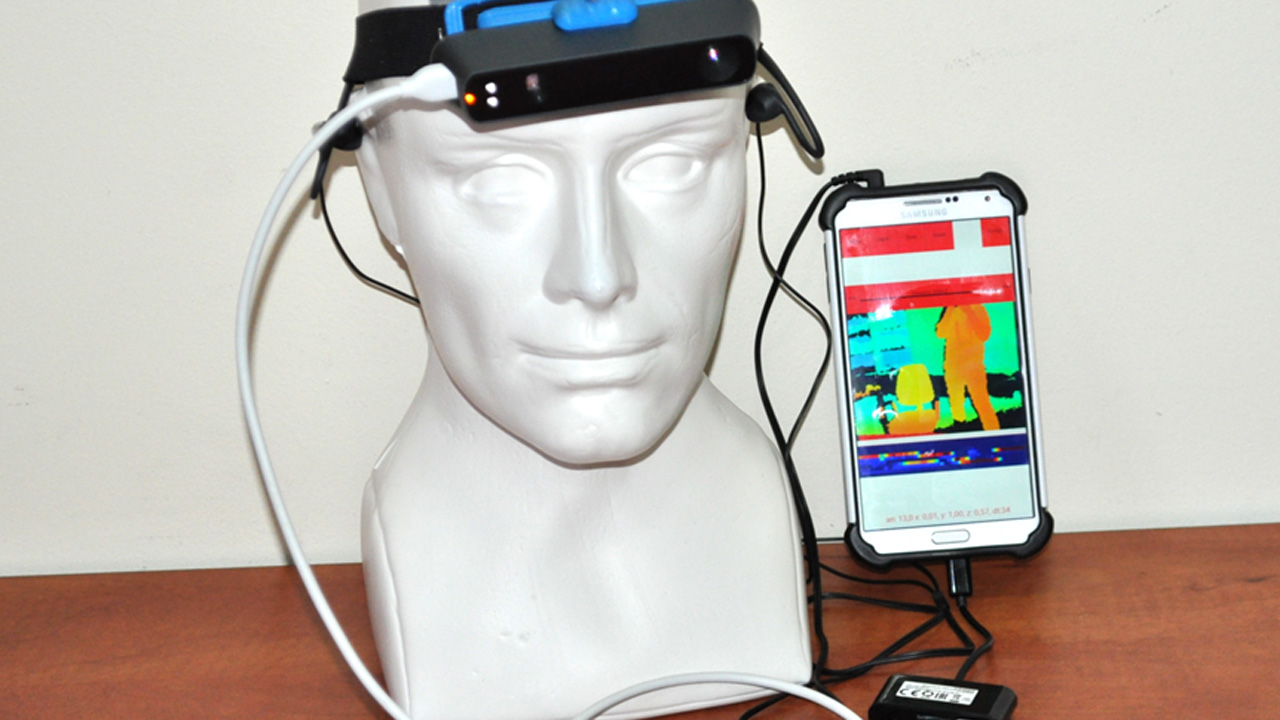

Wearable technology has played a significant role in researching and evaluating systems developed for and with the community of blind and visually impaired people (BVIP). This paper presents a system based on Google Glass designed to assist BVIP with scene recognition tasks, using the device as a visual assistant. The camera embedded in the smart glasses captures an image of the surroundings, which is then analyzed using the Custom Vision Application Programming Interface (Vision API) from Microsoft's Azure Cognitive Services.
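As a rough illustration of the last step, the sketch below turns a classification result into a short message that could be read aloud to the wearer. It does not call the service. The dictionary shape (a `predictions` list whose entries carry `tagName` and `probability`) is an assumption modeled on a typical image-classification response, and the function names, the 0.5 threshold, and the message wording are hypothetical choices made for this example, not details taken from the paper.

```python
def top_prediction(response, min_probability=0.5):
    """Return the most probable tag name, or None if nothing is confident enough.

    `response` is assumed to look like:
    {"predictions": [{"tagName": "kitchen", "probability": 0.91}, ...]}
    """
    predictions = response.get("predictions", [])
    if not predictions:
        return None
    best = max(predictions, key=lambda p: p["probability"])
    if best["probability"] < min_probability:
        return None
    return best["tagName"]


def spoken_message(response, min_probability=0.5):
    """Build the text that a text-to-speech engine could read to the user."""
    label = top_prediction(response, min_probability)
    if label is None:
        return "Scene not recognized"
    return f"This looks like: {label}"


# Hypothetical response for one captured frame.
example = {
    "predictions": [
        {"tagName": "office", "probability": 0.12},
        {"tagName": "kitchen", "probability": 0.83},
    ]
}
message = spoken_message(example)
```

Returning a fallback message when no tag clears the threshold avoids announcing a low-confidence guess as if it were certain.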

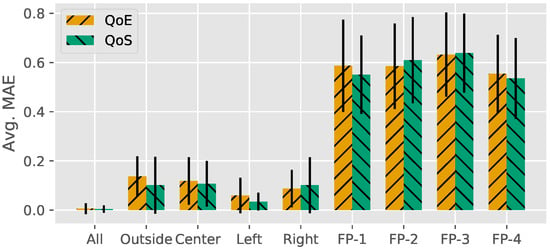

The World Health Organization estimates that approximately 2.6% of the human population is visually impaired, with 0.6% being totally blind. Blind and Visually Impaired People (BVIP) are likely to experience difficulties with tasks that involve scene recognition. In this research we propose to use visual sonification as a means to assist the visually impaired. Visual sonification is the process of transforming visual data into sounds, which would non-invasively allow blind persons to distinguish different objects in their surroundings using their sense of hearing. The approach, while non-invasive, creates a number of research challenges. Foremost, the ear is a much lower bandwidth interface than the optical nerves or a cortical interface (roughly 150k bps vs. …). Rather than converting visual inputs into a list of object labels (e.g., "car", "phone") as traditional visual aid systems do, we conjecture a paradigm where visual abstractions are directly transformed into auditory signatures. These signatures provide a rich characterization of objects in the surroundings and can be efficiently transmitted to the user.

This process leverages users' capabilities to learn and adapt to the auditory signatures over time. In this study we propose to obtain visual abstractions by using a popular representation in computer vision called bag-of-visual-words (BoW). In a BoW representation, an object category is modeled as a histogram of epitomic features (or visual words) that appear in the image and are created during an a priori offline learning phase. The histogram is then directly converted into an audio signature using a suitable modulation scheme. Our experiments demonstrate that humans are capable of successfully discriminating audio signatures associated with different visual categories (e.g., cars, phones) or object properties (front view, side view, far) following a short training procedure. Critically, our study shows that there exists a tradeoff between the complexity of representation (number of visual words used to form the histogram) and classification accuracy by humans.
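The bag-of-visual-words pipeline described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: the codebook is taken as given (in practice it would come from the offline learning phase), descriptors are plain tuples of numbers, and the "modulation scheme" used here is simple additive synthesis, where each visual word is assigned its own sine frequency and its histogram weight sets the amplitude. The abstract does not specify the actual scheme, so the function names and the `base_freq`, `freq_step`, `sample_rate`, and `duration` parameters are all hypothetical.

```python
import math


def nearest_word(descriptor, codebook):
    """Index of the codebook entry closest to the descriptor (squared Euclidean)."""
    return min(
        range(len(codebook)),
        key=lambda k: sum((d - c) ** 2 for d, c in zip(descriptor, codebook[k])),
    )


def bow_histogram(descriptors, codebook):
    """Normalized histogram counting how often each visual word occurs."""
    counts = [0] * len(codebook)
    for descriptor in descriptors:
        counts[nearest_word(descriptor, codebook)] += 1
    total = sum(counts)
    if total == 0:
        return [0.0] * len(codebook)
    return [c / total for c in counts]


def audio_signature(histogram, sample_rate=8000, duration=0.5,
                    base_freq=220.0, freq_step=110.0):
    """Assumed modulation scheme: one sine tone per visual word, weighted by its bin."""
    n_samples = int(sample_rate * duration)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        samples.append(sum(
            weight * math.sin(2 * math.pi * (base_freq + k * freq_step) * t)
            for k, weight in enumerate(histogram)
        ))
    return samples


# Toy example: a 3-word codebook and four 2-D descriptors from one image.
codebook = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
descriptors = [(0.1, 0.0), (0.0, 0.1), (0.9, 0.1), (0.1, 0.8)]
histogram = bow_histogram(descriptors, codebook)
signature = audio_signature(histogram)
```

The sketch also makes the reported tradeoff concrete: a larger codebook adds more simultaneous tones to the signature, which carries more visual detail but may be harder for a listener to tell apart.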
